We are basing our code on the sample provided here: https://doc.developer.milestonesys.com/mipsdkmobile/index.html?base=samples/web_audiosample.html&tree=tree_5.html

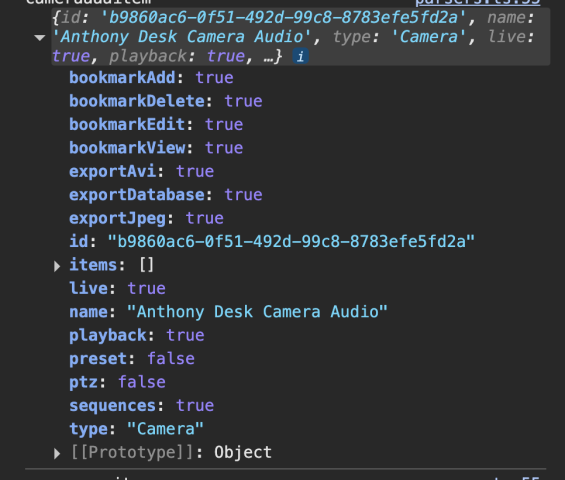

Below is a screenshot of the console log of the camera object. The camera has the capability for audio streaming.

Hi Michael,

Could you please provide information on:

- Which version of the Mobile Server are you using?

- Which operating system is your sample running on?

- Have you checked whether the codecs of the audio/speaker/microphone are set up to match what your device provides?

Thank you

Hi again,

Thanks for providing these details. Based on your system and a review of the code sample we provide, we would not expect you to have issues running the sample or using the ideas in it.

In your own code, make sure that you are calling both requestStream (for video) and requestAudioStream (for audio).

Also, check that only one stream is playing at a time per audio source; otherwise you may get errors or conflicts.
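The two points above can be sketched roughly as follows. This is only an illustration: `sdk` stands in for the Mobile SDK object, the `(params, onSuccess, onError)` call shape is an assumption, and `createStreamRequester`, `requestVideo`, `requestAudio` and `releaseAudio` are hypothetical helper names, not part of the SDK.

```javascript
// Sketch only: `sdk` stands in for the Mobile SDK object. The
// (params, onSuccess, onError) call shape is an assumption.
function createStreamRequester(sdk) {
  // Hypothetical bookkeeping: params of the single audio stream in use, or null.
  let activeAudio = null;

  // Video goes through requestStream.
  function requestVideo(params, onSuccess, onError) {
    return sdk.requestStream(params, onSuccess, onError);
  }

  // Audio goes through requestAudioStream, but only if no other
  // audio stream is currently tracked as active.
  function requestAudio(params, onSuccess, onError) {
    if (activeAudio !== null) {
      onError(new Error('An audio stream is already active; release it first.'));
      return null;
    }
    activeAudio = params;
    return sdk.requestAudioStream(params, onSuccess, (err) => {
      activeAudio = null; // free the slot so a retry is possible
      onError(err);
    });
  }

  // Call this after the audio stream has been closed.
  function releaseAudio() {
    activeAudio = null;
  }

  return { requestVideo, requestAudio, releaseAudio };
}
```

In real code you would pass the SDK object in place of `sdk` and call `releaseAudio()` once the audio stream has been closed.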

We are making the same requests as the Milestone sample, but we are getting different responses. I am unable to attach more than one file, but attached is the result of our latest attempt.

Hi Michael,

I tried to reproduce what you mentioned and ran the audioSample. I observed that the requestStream and requestAudioStream calls were sent as expected.

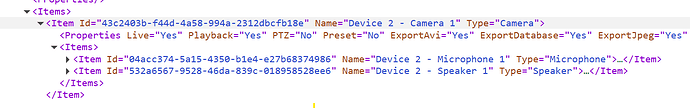

Then I realized you might actually be looking to detect the type of item. For instance, instead of just seeing type="Camera", you want to catch when it says type="Microphone" or type="Speakers".

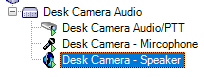

To do that, I would suggest using the method called getAllViewsAndCameras. This will give you all your views and cameras, including their types. See the picture here.

In the audioSample, you could comment out the code in the function removeCameraWithoutAudio. This will allow you to retrieve all devices (cameras, microphones, speakers) and then develop on top of that.

Is this what you are trying to do? If not, please provide some more input. I’d be happy to help.
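Once all devices are returned, picking out the microphones and speakers by type could look something like the sketch below. The response shape is an assumption here: the field names `Type`, `Name` and `Items`, the nested-tree layout, and the `collectByType` helper are hypothetical and should be adjusted to whatever getAllViewsAndCameras actually returns on your system.

```javascript
// Sketch only: walks a nested tree and collects every node whose
// Type is in wantedTypes. Field names (Type, Name, Items) are assumed.
function collectByType(node, wantedTypes, found = []) {
  if (node === null || typeof node !== 'object') return found;
  if (wantedTypes.includes(node.Type)) found.push(node);
  const children = Array.isArray(node.Items) ? node.Items : [];
  for (const child of children) collectByType(child, wantedTypes, found);
  return found;
}

// Made-up sample data, only to show the traversal.
const sampleTree = {
  Type: 'View',
  Name: 'Lobby',
  Items: [
    {
      Type: 'Camera',
      Name: 'Cam 1',
      Items: [
        { Type: 'Microphone', Name: 'Mic 1' },
        { Type: 'Speakers', Name: 'Spk 1' },
      ],
    },
    { Type: 'Camera', Name: 'Cam 2' },
  ],
};

const audioDevices = collectByType(sampleTree, ['Microphone', 'Speakers']);
console.log(audioDevices.map((device) => device.Name));
```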

Hi @michael fianko

I would like to know whether this answer was helpful to you. Or are you looking for something different?

Hi @Constantina Ioannou

We were able to test the “getAllViewsAndCameras” method and there was no difference. The response data does not have what we need.

Hi again,

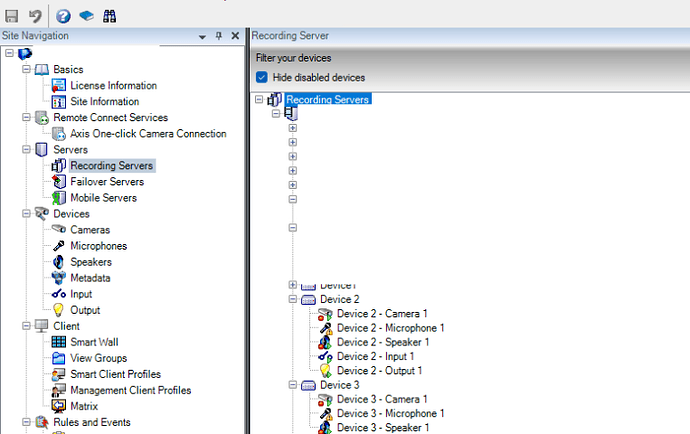

Maybe you don’t see similar output because your Management Client has a different setup for those cameras/microphones/speakers. In my example, where I show the output of getAllViewsAndCameras, I have the following setup in my Management Client:

I’d appreciate any further details that could help me better understand your problem.

Good Morning,

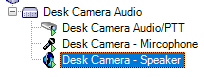

This is what we have in the Management Client. We have also confirmed that audio streaming and PTT work in the Milestone web client. We are just unable to get those functions to work when calling them from the SDK.

Hi @michael fianko

I am not able to reproduce what you see, and calling the methods I have mentioned from the SDK should provide you with the information you need. Is there any other information, or maybe part of your code, that you can provide?

I believe we have found a resolution to this issue. We needed to add a couple of parameters that weren’t in the docs.

    {
        SupportsAudioIn: "Yes",
        SupportsAudioOut: "Yes",
    }
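For anyone who wants to apply the same fix, one way to keep these two flags in a single place is a small helper that merges them into a params object. This is only a sketch: the post does not say which SDK call takes these parameters, so the helper just returns the merged object, and `AUDIO_SUPPORT_FLAGS` and `withAudioSupport` are hypothetical names.

```javascript
// The two parameters from the post above, kept in one place.
const AUDIO_SUPPORT_FLAGS = Object.freeze({
  SupportsAudioIn: 'Yes',
  SupportsAudioOut: 'Yes',
});

// Hypothetical helper: returns a copy of `params` with the audio flags
// added. The flags are spread last, so they override any existing
// values for the same keys. The input object is not modified.
function withAudioSupport(params = {}) {
  return { ...params, ...AUDIO_SUPPORT_FLAGS };
}
```

The merged object can then be passed to whichever SDK call needed the extra parameters in your setup.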